You Write Like a Machine. That's Good.

I spent ten minutes fixing the logic of an article. The AI score jumped from 31% to 67%. Churchill failed the same test. Welcome to the Failurors Club.

AI detection is sold as a truth machine. It is not. It is a mirror pointed at the wrong face. This piece is about what it actually reflects, and why you should stop flinching. Essentially, who has the right to determine whether a person's style is too human or too AI?

Before we start, let me tell you a story written around 250 BCE by Han Fei. It describes a merchant in the state of Chu (southern China) who was selling two things: a spear and a shield. He boasted that his spear could pierce any shield. He also boasted that his shield could block any spear. Then a bystander asked the obvious question: what happens when your spear meets your shield?

That is what I'd love to discuss today.

I'd say that whoever has the right, it shouldn't be the one who also sells you humanization.

My Lost Career Opportunity as an Editor

Let me start by telling you what happened on a Tuesday morning.

Someone sent me an English article of around 1,110 words. She told me that an AI detector had given it a 31% likelihood of being written by AI. Knowing her teacher would run the piece through the detector, she felt nervous.

'Could you take a look?' she asked. 'I don't know why the score is so high.'

Of course I could take a look. I spent roughly ten minutes on it, mainly reading through her arguments and fixing some minor logic sequences. Kind of made A lead to B, made the argument actually land, you know, that type of thing. I did not touch vocabulary (mine is smaller than hers to start with) or grammar (my weakest link), but I did fix one spelling mistake, and I was feeling good about it.

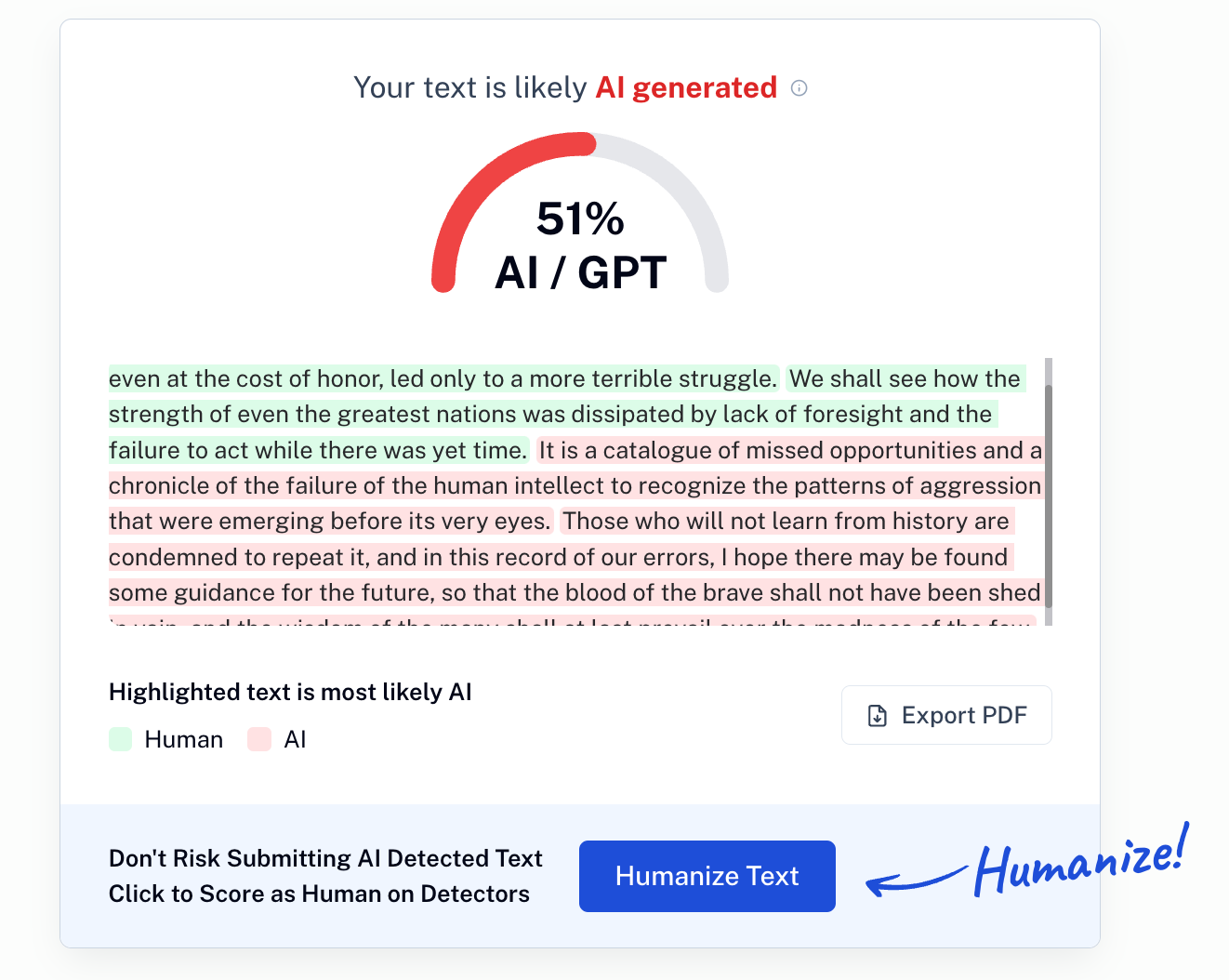

She ran it again. 67%. The AI detector was sure it was written by GPT.

Oh boy.

I used to believe I was human.

The Crime of Coherence

It took me two cups of coffee to calm down. And here is what I came up with:

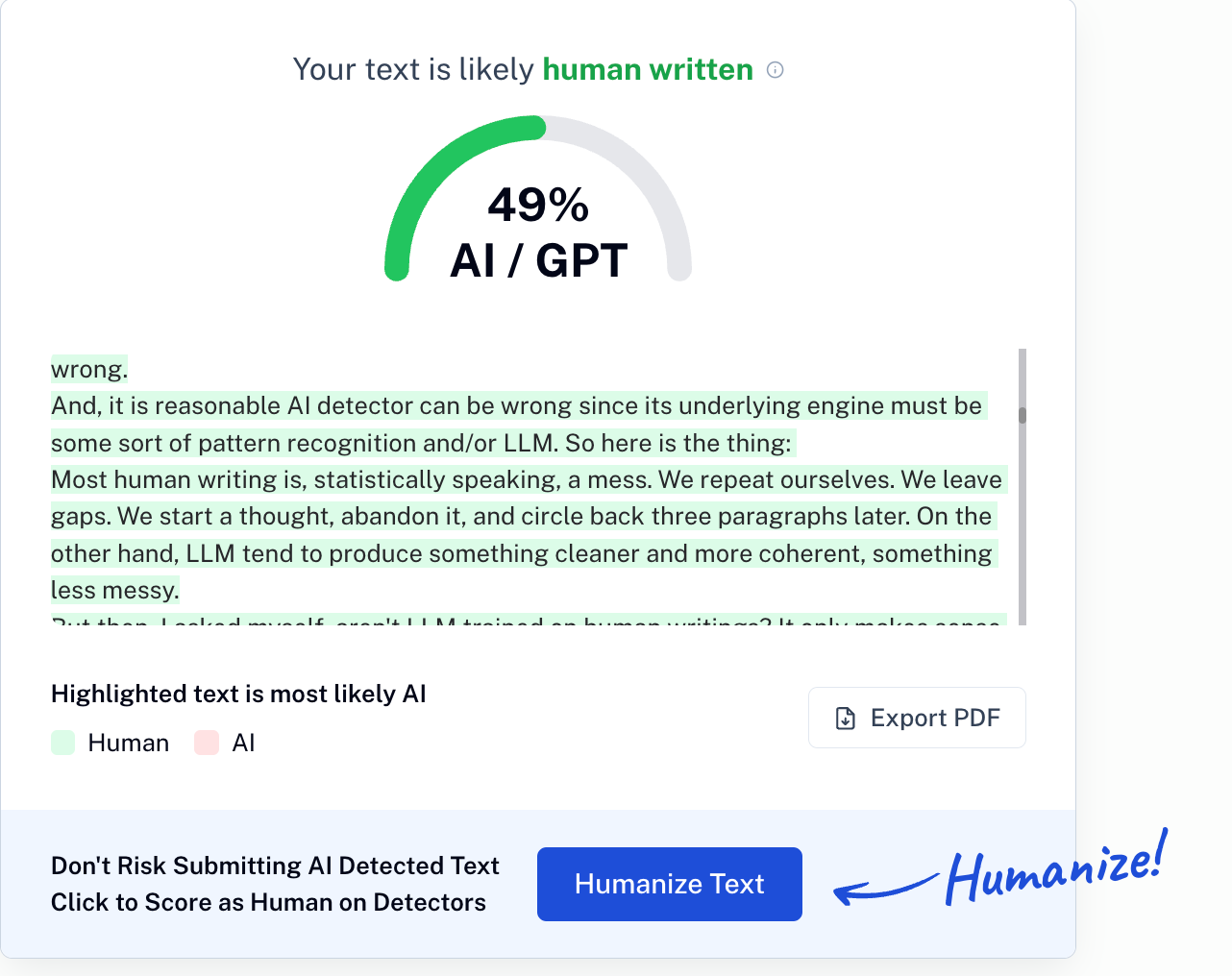

- I am obviously human, since machines don't consume coffee. So the AI detector was wrong.

- And it is reasonable that an AI detector can be wrong, since its underlying engine is presumably some sort of pattern recognition and/or LLM. So here is the thing:

Most human writing is, statistically speaking, a mess. We repeat ourselves. We leave gaps. We start a thought, abandon it, and circle back three paragraphs later. LLMs, on the other hand, tend to produce something cleaner and more coherent, something less messy.

But then, I asked myself, aren't LLMs trained on human writing? It would only make sense for the works of famous authors and Nobel laureates in literature to be given a higher weight in training. So what would an LLM-based detector say about their writing?

So I fed the system Churchill.

And there it is: another 'AI being' caught. The man who held the English language together with his bare hands during the worst years of the twentieth century did not write human enough.

I have to say, having Winston as a fellow failuror doesn't feel that bad.

Humanizer: The Incentive

Let's not be naive about why this industry exists. The needs are real: teachers want to make sure homework is done by students, not GPT. Knowing their teachers will run detectors against their homework, students preemptively adopt the technology.

A detector that cleared most articles would have no product to sell. The threshold is set where it is for a reason: to sell humanization.

It is interesting to notice that detection is sometimes free, but humanization is always a paid feature. I guess I'd panic if my homework were due in 30 minutes and I got a 67%. I'd pay.
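To make the point about the threshold concrete, here is a minimal sketch. It assumes a detector reduces to a score between 0 and 100 compared against a cutoff; the function name `is_flagged` and the three cutoffs are made up for illustration and do not describe any real product. The two scores are the ones from my Tuesday morning.

```python
# Hypothetical illustration only: no real detector's scoring or cutoff is used here.
def is_flagged(score: float, threshold: float) -> bool:
    """Return True when an AI-likelihood score (0-100) meets or exceeds the cutoff."""
    return score >= threshold


# The two scores from this story: before and after ten minutes of logic fixes.
scores = {"original draft": 31.0, "after logic fixes": 67.0}

# Assumed cutoffs, chosen only to show how the verdict moves with the line.
for threshold in (25.0, 50.0, 75.0):
    verdicts = {name: is_flagged(score, threshold) for name, score in scores.items()}
    print(f"cutoff {threshold}: {verdicts}")
```

The text never changes between runs. Only the cutoff does, and with it the number of people who need to buy something.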

Here is the loop in full:

Step one. Your article scores 31% AI likelihood. You are not sure why, but it sounds bad. You ask your trusted advisor to take a look, and she makes the score soar to 67%. You are told it now reads like AI/GPT. You panic.

Step two. You purchase the humanizer. It rewrites your prose into something warmer, looser, more natural. More human, maybe. It also gives you a free 're-humanize' option.

Step three. You read the output. The logic is gone. The argument has been sanded down into something that sounds like a person who is trying very hard to sound like another person.

Step four. You spend ten minutes fixing the logic. You run the detector again. You are back in the AI realm.

The loop leaves you desperate. It was designed to sell 'humanization'. In this case, the spear and the shield are both invincible. The company that sells you the detector and the humanizer already knows this. They are counting on you not to notice.

The Ultimate Irony – Writing Like AI Is Not Even Hard for a Human

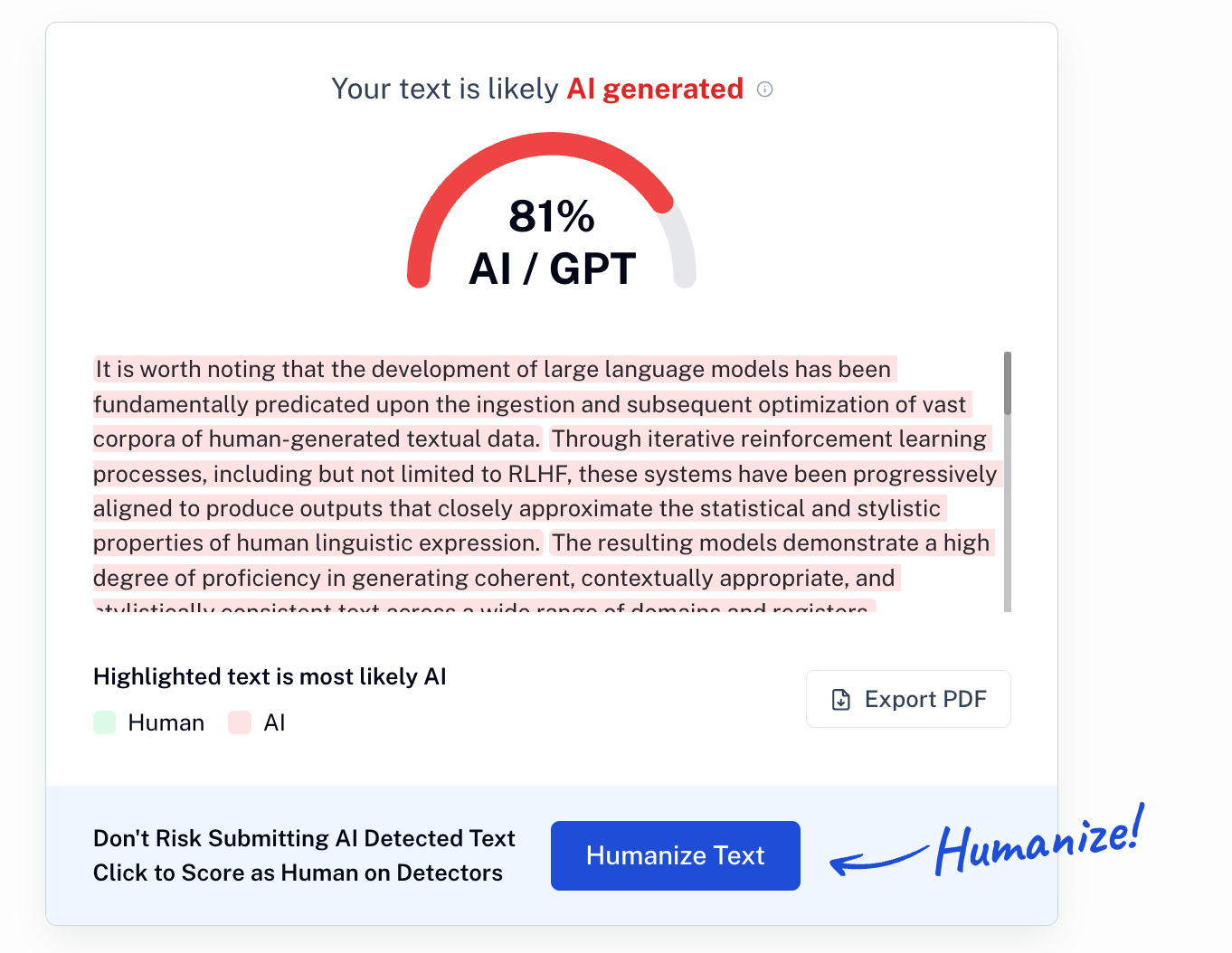

It is worth noting that the development of large language models has been fundamentally predicated upon the ingestion and subsequent optimization of vast corpora of human-generated textual data. Through iterative reinforcement learning processes, including but not limited to RLHF, these systems have been progressively aligned to produce outputs that closely approximate the statistical and stylistic properties of human linguistic expression. The resulting models demonstrate a high degree of proficiency in generating coherent, contextually appropriate, and stylistically consistent text across a wide range of domains and registers.

Given this foundational context, the emergence of AI detection as a distinct technological category presents a compelling paradox. The very features that detection systems identify as indicative of AI-generated content, namely logical coherence, structural consistency, and lexical precision, are precisely the features that the underlying language models were explicitly trained to replicate from human sources. Consequently, the classification boundary between human and AI authorship becomes increasingly indeterminate, as the optimization target of the generative system and the detection criteria of the evaluative system converge upon an identical set of textual properties.

The practical implications for human authors are significant. Individuals who demonstrate above-average proficiency in logical argumentation, syntactic consistency, and structured prose composition are disproportionately likely to receive elevated AI probability scores, irrespective of the actual provenance of the text in question.

The only variable is where the line is drawn. If that line is gamed, it is easy for a human to 'write like AI', or vice versa. So we are back to the old question: what happens when the sharpest spear meets the strongest shield?

The merchant had no answer.

He still doesn't. He has just rebranded as a SaaS company selling humanization services to kids.

In many cases, the model writing our draft, the model judging our draft, and the model humanizing the draft are architecturally identical. Someone charged us three times for the same spear, shield and upgrade service.

We did this to ourselves.

Let me show you the AI score for this section, BTW, so that you know I'm not bluffing about how easy it is for a human to write like AI.

A Word to the People Running the Tests

To the teachers and editors: read the work.

I understand the appeal of the shortcut. There is a lot of writing coming in, and not enough time. A percentage feels efficient, and it must be objective, since it comes from a third party.

It is neither.

A high AI score tells you the writing is coherent. It tells you the argument flows. It tells you nothing about whether a human thought the thoughts. If you trust a percentage more than your own reading, you have already handed your judgment to a machine. The thing you were trying to catch has already won.

Read for the idea. If the idea is there, the origin of the prose matters much less than we are pretending it does.

To the creators: stop apologizing.

If your own work has a high score, that is not an indictment. That is a signal that you write with structure and intention. The machine spent billions of dollars learning to do what you do naturally. You were already at the finish line while it was still learning to crawl.

Don't dumb it down. Don't hollow out the logic to satisfy an algorithm. Don't let a commercial product define the ceiling of your thinking. The only thing you might want to do is to make it more lively, more fun, and more you.

Wear the score. It means you still know how to think.

A version of this argument previously appeared in Chinese on this blog, prompted by a very real Tuesday morning and the sadness that I would never again be asked to edit anyone's articles.

P.S. If you are wondering about the AI score for this entire piece:

I am more human than Winston, after all, even though I was purposefully more AI in one specific section.