Chatting With The Oracle #1: There Is No Hallucination. There Is Only the Oracle.

AI agents are being deployed to make real-time decisions, yet they have no reliable sense of when they are, or of what they are saying. That's not a minor bug. It's a fundamental problem for anything we ask them to do autonomously.

Hallucination is the word we use to describe an AI pretending to know something it does not. That's troublesome. It implies that an LLM has two modes: knowing and not-knowing. It also suggests the model slips into fabrication when it does not know.

The framing is wrong, and the wrongness matters.

This piece attempts to reframe it through something we have known for thousands of years and still see in modern culture.

I read an interesting article today. It is certainly well-written, and it got me thinking more about hallucination.

“Hallucination is when an AI gives out wrong answers with absolute confidence. No hesitation. A wrong answer stated as fact… when an AI doesn’t know something, it doesn’t say ‘I don’t know.’ It generates what sounds like a correct answer because that’s literally what it was trained to do.” — If You Understand These 5 AI Terms, You’re Ahead of 90% of People

This is close to a textbook explanation. We find some version of it in nearly every AI explainer written in the last three years. It makes sense, and it reminds us of THAT classmate or coworker who hallucinates with confidence.

Sure. If we see it so often in human life, surely AI could pick it up through training.

Except if we pull on one thread, the whole framing begins to come apart.

Knowing or not knowing, that is the question.

The word hallucination has never been neutral. From its Latin root, alucinari, it carried the sense of wandering in mind, of straying from the path, of being lost in one's own noise. To call what an LLM does hallucination is to import all of that. It implies two modes exist: knowing and not-knowing. It suggests the model slips into fabrication when it finds itself in the second.

The explanation assumes that an AI model has two modes. In the knowing mode, it retrieves something real. In the not-knowing mode, it fabricates instead. Hallucination, in this frame, is what happens when the second mode shamelessly masquerades as the first.

But what if there is no knowing, hence there’s no not-knowing?

That’s where today’s LLMs are. Most of them are built on an architecture called the transformer, introduced in 2017. It is essentially a text-in, text-out machine. In this machine, ‘knowing’ is a foreign concept.

It has only one process, and it runs the same way every time. A language model reads the pattern of what came before and predicts what comes next. It does this whether the output turns out to be accurate or wildly incorrect. The process does not change. The process does not know which outcome it is producing, least of all while it is producing it.

Fabricating something is not the model realizing it lacks knowledge and reaching for invention instead. It is the same prediction, landing somewhere reality does not confirm.
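To make the ‘one process’ point concrete, here is a toy sketch in Python. It is nothing like a real transformer: it is a hypothetical word-counting predictor over a three-sentence corpus, and the names `CORPUS`, `train`, and `predict_next` are invented for illustration. What it shares with the real thing is the shape of the argument: there is no branch for ‘known’ versus ‘unknown’ prompts, only one scoring step that runs the same way every time.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: the only text this "model" has ever seen.
CORPUS = (
    "the capital of france is paris . "
    "the capital of italy is rome . "
    "the capital of spain is madrid ."
).split()

def train(tokens):
    """Count which token follows each one-token and two-token context."""
    counts = defaultdict(Counter)
    for i in range(1, len(tokens)):
        counts[(tokens[i - 1],)][tokens[i]] += 1
        if i >= 2:
            counts[(tokens[i - 2], tokens[i - 1])][tokens[i]] += 1
    return counts

def predict_next(counts, prompt):
    """The single process: score candidates from the recent pattern, return the top one.

    There is no branch for "known" versus "unknown" prompts. A matching
    two-token context simply weighs more than a one-token one (the factor
    of 10 is an arbitrary illustrative choice). Assumes the prompt's last
    word appears somewhere in the corpus.
    """
    words = prompt.split()
    scores = Counter()
    for token, n in counts[tuple(words[-2:])].items():
        scores[token] += 10 * n
    for token, n in counts[tuple(words[-1:])].items():
        scores[token] += n
    return max(scores, key=scores.get)  # ties go to the first candidate seen

model = train(CORPUS)
print(predict_next(model, "the capital of italy is"))    # rome
print(predict_next(model, "the capital of germany is"))  # paris
```

Asked about Italy, the pattern lands on a fact reality confirms. Asked about Germany, which the corpus never mentions, the same step lands on ‘paris’. Nothing inside `predict_next` marks the second answer as different from the first.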

Or perhaps we could say an LLM fabricates everything. After all, it has no embodiment, and it is never fed the consequences of what it says.

Let’s not forget that an LLM has no skin in the game. It gains nothing by shamelessly pretending, and it never did.

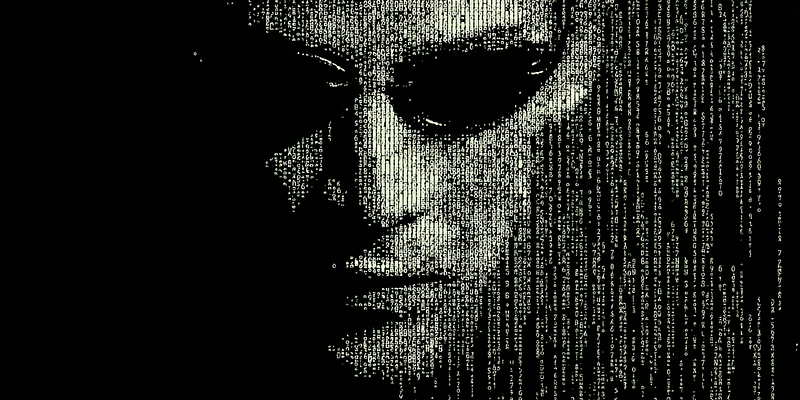

The Oracle

Let me introduce you to The Oracle, who lived in Delphi waiting for kings to appear, or in China busy reading burned turtle bones, or in New Orleans in a smoky room.

We humans have consulted oracles since the dawn of civilization. Oracles read cracks in bones, leaves in teacups, patterns in smoke, and configurations of stars. The future was, is, and always will be written in something our untrained human eyes can’t see. But someone is able to speak it to us: those who can read patterns and produce utterances.

There is a statistical linkage between what is happening now and what will happen in the next second. Oracles are the tellers, and the listeners always bring their own interpretations and their own stakes to the decisions that follow.

Nobody ever dared to ask whether the oracle knew the future. Well, maybe I’m wrong. Let me rephrase: other than Neo, nobody ever challenged the oracle.

The Oracle in the Matrix no longer reads bones. She drinks coffee in a housing project kitchen, speaks in riddles that feel like warmth, and tells people difficult things in careful ways.

She told Neo: no, you are not the one.

She was right then, but wrong later. Let’s not forget she also told Trinity she’d fall in love with a dead man. So she was more right than wrong… I guess.

Except we can never tell whether the future was something she knew, or something her knowing brought about.

The Lottery and The Oracle

Now, let’s visit the oracle with a critical question: am I the one who will win the mega lottery this Saturday?

Let’s assume she’s receiving everyone in the world, and what she tells everyone who walks through her door is some version of this:

No, you are not the one.

There couldn’t be a more accurate statement. When the result comes out, everyone shall cheer, ‘the Oracle is right again!’, except the one person, or the few people, who actually win.

And that might be precisely what happened to Neo.

In any given population of anomalies moving through the system, only one becomes the One. The oracle who tells each of them ‘you are not the one’ is statistically almost bulletproof. It holds across nearly every case it is applied to.
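The lottery arithmetic is easy to check. A minimal sketch, assuming a hypothetical oracle that says the same thing to every seeker and a population with a single winner (the function names, the population size, and the winner ID are all made up for illustration):

```python
def oracle(seeker_id):
    """Hypothetical oracle: reads no individual signal, says the same thing to all."""
    return False  # False means "No, you are not the one."

def oracle_accuracy(population_size, winner_ids):
    """Fraction of seekers whose fate matches what the oracle told them."""
    hits = sum(
        oracle(s) == (s in winner_ids)  # True when the utterance matched reality
        for s in range(population_size)
    )
    return hits / population_size

print(oracle_accuracy(1_000_000, {42}))  # 0.999999
```

The only miss is seeker 42. From inside the function, that call was identical to the other 999,999.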

What we call hallucination is simply what that statement looks like from the side of Neo, the anomaly who made it through. Since all of us spent those couple of hours watching Neo, we think she was bluffing.

Yet from inside the oracle’s process, nothing different happened. The same pattern was read. The same utterance was produced. Had the Oracle been the main character, we’d be sitting in her kitchen, watching her say the same sentence to millions of programs. It would be the most boring movie ever made, but it would prove the oracle is indeed brilliant.

She was wrong only once.

And here is the part that should sit with us a little uncomfortably. The oracle never knew. Not when she was right, and not when she was wrong. There is no internal signal that distinguishes the two.

Only Trinity knows.

Hallucination as the definition

This brings us to the real problem.

The model is doing what it does. It reads a pattern and produces an output based on probability, consistently and without pretense.

The problem is the word we chose.

Hallucination implies a baseline exists. It implies that most of the time the model is sober and all is fine, and that only occasionally does something break down. It might even give hope that if we train LLMs harder, hallucination will happen less often.

It imports the architecture of a malfunctioning mind, one that could be cured with medication. Except hallucination can’t be cured by training. It is structural. Everyone who builds on the transformer inherits it. OpenAI’s own 2025 research confirms that training itself rewards confident guessing.

(We are focusing on training, not post-training. RLHF, RAG, and grounding techniques operate on outputs, not on the underlying prediction process. We will talk about post-training very soon.)
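The incentive behind the confident-guessing claim can be sketched with a little arithmetic. This is a simplified illustration of the general argument, not a reproduction of OpenAI’s analysis, and it assumes a hypothetical grading scheme: a correct answer scores 1, ‘I don’t know’ scores 0, and a wrong answer scores `-wrong_penalty`, where a penalty of 0 is plain accuracy grading. The function names are invented for the example.

```python
def expected_score(p_correct, abstain, wrong_penalty=0.0):
    """Expected score on one question under the assumed grading scheme."""
    if abstain:
        return 0.0  # "I don't know" earns nothing, but loses nothing
    return p_correct - (1 - p_correct) * wrong_penalty

def best_policy(p_correct, wrong_penalty=0.0):
    """Whichever of guessing or abstaining has the higher expected score."""
    guess = expected_score(p_correct, abstain=False, wrong_penalty=wrong_penalty)
    idk = expected_score(p_correct, abstain=True, wrong_penalty=wrong_penalty)
    return "guess" if guess > idk else "abstain"

# Plain accuracy grading: even a 1% shot beats admitting uncertainty.
print(best_policy(0.01))                     # guess
# Penalize wrong answers and a long shot is no longer worth taking.
print(best_policy(0.01, wrong_penalty=1.0))  # abstain
```

Under plain accuracy grading, any nonzero chance of being right makes guessing the better bet, so a system tuned to maximize that score is pushed toward confident answers.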

When we are trying to describe something that does not live in our cognitive dimensions, we humans tend to reach for the nearest familiar shape. We say AI is hallucinating because that is what it would be called if a person did it.

But let me remind us: AI Is the Child of Probability, Humans Are Prisoners of Causality.

The word fails us because of its rich context in human interaction. We are essentially defining a state by its consequences. We only call it hallucination in retrospect, when the outcome disappoints.

The same prediction that we call correct on Tuesday we would call hallucination on Wednesday if the facts had changed. The process was identical. A label that shifts with our expectations tells us nothing about the machine. It tells us only about ourselves.

There is but one state. We label part of it depending on what happens next, which implies there’s another unnamed part. That’s not a description of the machine. That’s a description of us.

What we need, perhaps, is not a better explanation of hallucination. What we need is the humility to admit that we have been naming something we do not yet have the right language for. For further reading, we explored the naming and communication gap in The Two and a Half People Who Understood.

The oracle has always been with us. We understood it intuitively for centuries. Then we built a new one, dressed it in human clothing, and forgot what we already knew.

The bones do not know. Neither does the Oracle.

P.S. Let me try to explain why the name matters.

When we call false output hallucination, the model becomes the apparent site of failure. The people and institutions that built it, deployed it, verified nothing, and put it in front of users... well, they recede from view. The real failure happened somewhere in the chain between utterance and use. And in that somewhere, a human or an institution chose not to act. The word does this work quietly, without anyone agreeing that this is what we want.

But an LLM only produces utterances. Utterances require interpretation. Interpretation requires someone to check and authorize. Whoever actively decides what an utterance is allowed to become is accountable. The model is not.

The bones do not know. Neither does the Oracle. That's why priests are needed. Someone needs to verify the utterances before we act on them. Keep calling it hallucination, and the question of who is responsible never has to be asked.

That's why.

Now it appears that I will start a series about the Oracle. Let's see how far I can go.

The second piece: Chatting With The Oracle #2: The AI That Doesn’t Know What Time It Is